Atypica vs Synthetic Research Tools: Strategy vs Testing

Compare atypica’s strategic user research with synthetic testing tools. Learn when to use deep insights for product strategy vs automated testing for usability.

keywords: atypica vs synthetic research, automated testing tools, user research vs usability testing, product strategy research, synthetic users

Atypica vs Synthetic Research Tools: Why Strategy Requires More Than Testing

The Testing Trap

Synthetic research tools (Evidenza, Usight.AI, etc.) automate usability testing—finding where users get stuck, which buttons they miss, what confuses them.

But here’s what testing can’t tell you: why users want your product, whether you’re solving the right problem, or whether your positioning resonates.

Atypica addresses the strategic questions that determine product success. While synthetic tools optimize execution, atypica validates direction—ensuring you’re building the right product, not just building the product right.

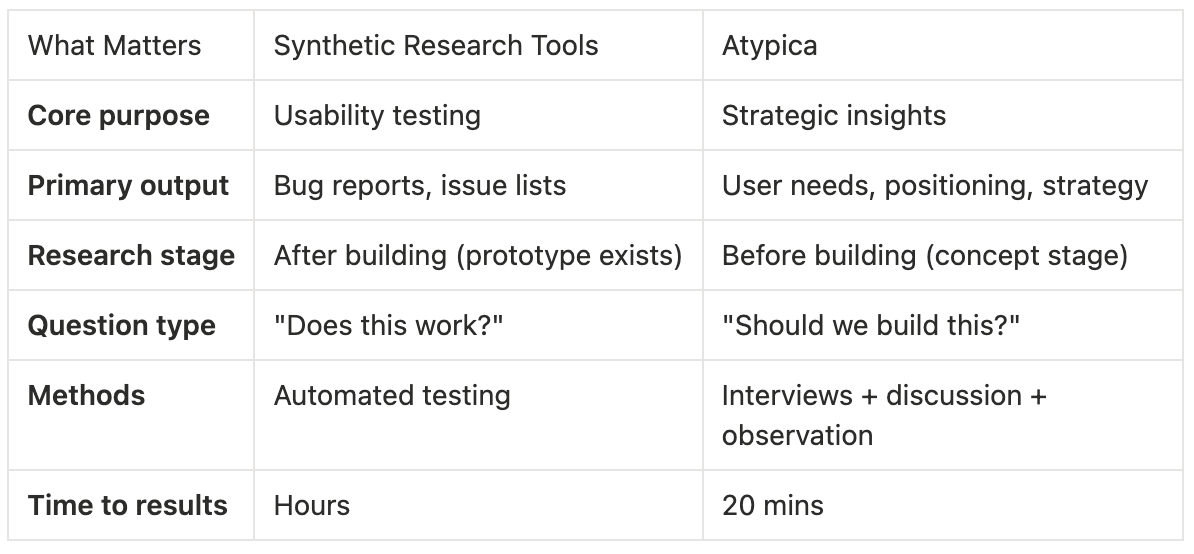

Quick Comparison

The reality: Synthetic tools tell you users got stuck at step 2. Atypica tells you why step 2 exists (you misunderstood what users actually need) and what to build instead.

Why Atypica Solves Different Problems

1. Strategic Insights vs Surface Issues

Synthetic tools output:

- Redesign navigation

- Simplify step 2

- Make button more prominent

This finds execution problems. But it misses strategic problems.

Atypica research reveals:

Strategic insight: It’s not a UX problem; it’s a value-communication problem.

Recommendation: Demonstrate value before asking for commitment.

The difference: Synthetic tools optimize the wrong solution. Atypica identifies the right problem.

2. Full Lifecycle vs Testing Phase

Synthetic tools work only when:

You have a prototype to test

Features are already built

You’re optimizing an existing design

Atypica works throughout:

Concept stage: Should we build this? Which direction?

Pre-launch: What positioning resonates? What price?

Post-launch: Why are users churning? What should we prioritize?

Value: Atypica prevents building the wrong product. Synthetic tools only help once you’ve already invested development resources.

Example: A startup is considering three product directions.

Synthetic tools: Can’t help (nothing built yet)

Atypica: 20 minutes of research reveals that Direction A has weak demand (4/10) and Direction B has strong demand (9/10)

Outcome: The team avoids spending 6 months building the wrong product

3. Multi-Method Validation vs Single Approach

Synthetic tools’ method: Automated testing, in which users interact with a prototype and the system logs issues.

Limitation: Only captures what users do, not why they do it. Can’t explore hypotheticals or understand motivations.

Atypica methods:

Interview: Deep conversations revealing motivations

Discussion: Group dynamics showing preference conflicts

Scout: Social media observation capturing unfiltered opinions

webSearch: Competitive and market context

Why this matters: Suppose testing shows 60% of users abandon at step 2. Interviews reveal they’re not failing; they’re choosing to leave because your value proposition doesn’t justify the effort. Different problem, different solution.

What Synthetic Tools Can’t Do

1. Validate Before Building

Synthetic tools need working prototypes. By the time you can test, you’ve already committed development resources.

Atypica validates concepts before any code is written—saving teams from building products users don’t want.

2. Answer Strategic Questions

Testing tools surface execution issues. They don’t answer:

Is this the right target market?

Which features matter most?

What should we charge?

How should we position against competitors?

Why are power users churning?

Atypica research addresses these questions directly, providing strategic direction that testing can’t offer.

3. Understand Context

A synthetic test might show users struggling with a feature. But it can’t tell you:

They only use that feature because no alternative exists

They’d switch to a simpler product instantly

The feature isn’t the problem—your pricing tier is

Atypica interviews explore context, alternatives, and unspoken needs that testing never captures.

When Synthetic Tools Add Value

Synthetic tools excel at:

Quick usability validation during iteration

A/B testing at scale

Catching obvious UX bugs before launch

Continuous monitoring for regressions

Scenario: The product is already validated and built. Now the team is optimizing the onboarding flow, testing three button placements and two copy variations.

Synthetic tools efficiently test all six combinations and identify the best-performing option.

The distinction: Use synthetic tools for optimization. Use atypica for validation.

Combined Use Strategy

Recommended workflow:

Pre-development (Week 1): Atypica research

Development (Weeks 2-8): Build the validated product

Pre-launch (Week 9): Synthetic tool testing

Post-launch (Ongoing): Atypica research

Value: Atypica ensures strategic direction. Synthetic tools polish execution. Together, they cover strategy and tactics.

Real Case: The 5% Conversion Problem

Background: SaaS product with 5% free-to-paid conversion.

Testing-only approach:

- Form completion improved to 80%

- Conversion rate: 5.1%

- Minimal impact despite fixing “the issue”

Strategic research approach:

Result: Conversion rate 5% → 18%

The lesson: Synthetic testing optimized a form that wasn’t the problem. Strategic research identified what actually blocked conversion.

Common Questions

Q: Can synthetic tools’ speed replace atypica’s depth?

Speed is valuable for iteration. But fast answers to the wrong questions don’t help.

Synthetic tools quickly tell you users clicked the wrong button. Atypica tells you the button exists because you misunderstood user goals. Different problems requiring different solutions.

Q: Why not use both from the start?

Budget and timing often rule that out. If you can only invest in one research approach:

Choose atypica first (validates direction, prevents wasted development)

Add synthetic tools later (optimizes execution)

Building the wrong product efficiently still fails. Building the right product with minor UX issues still succeeds.

Q: Are synthetic tools cheaper?

Potentially, depending on volume. But consider total cost:

Building the wrong product after synthetic testing: Development cost + testing cost + opportunity cost of the wrong direction = $50K-200K lost

Validating direction with atypica first: Subscription cost, offset by $50K-200K in development you don’t spend on the wrong features

The expensive mistake isn’t research cost—it’s building what users don’t want.

The Core Takeaway

Synthetic research tools optimize product execution. Atypica validates product strategy.

The question isn’t “which is better”; they address different needs. The real question is: “What do you need to know?”

If you need to catch usability bugs in an already-validated product, synthetic tools efficiently find issues.

If you need to validate whether you’re building the right product for the right users at the right price, atypica provides strategic insights testing cannot.

In 90% of product failures, the problem isn’t execution; it’s direction. That’s why strategic research comes first.

Ready to validate your direction? Run atypica research today and make sure you’re building what users want.